(Tutorial for beginners)


In this tutorial, we will train a custom object detector for mask detection using YOLOv4-tiny and Darknet on a Windows system.

FOLLOW THESE 10 STEPS TO TRAIN AN OBJECT DETECTOR USING YOLOv4-tiny


- Create yolov4-tiny and training folders on your Desktop

- Open a command prompt and navigate to the “yolov4-tiny” folder

- Create and copy the darknet.exe file

- Create & copy the files we need for training ( i.e. “obj” dataset, “yolov4-tiny-custom.cfg”, “obj.data”, “obj.names” and “process.py” ) to your yolov4-tiny dir

- Copy the files “yolov4-tiny-custom.cfg”, “obj.data”, “obj.names”, and “process.py” and the “obj” dataset folder from the yolov4-tiny directory to the darknet directory

- Run the process.py python script to create the train.txt & test.txt files

- Download the pre-trained YOLOv4-tiny weights

- Train the detector

- Check performance

- Test your custom Object Detector

~~~~~~~~~~~~~~ LET’S BEGIN !! ~~~~~~~~~~~~~~

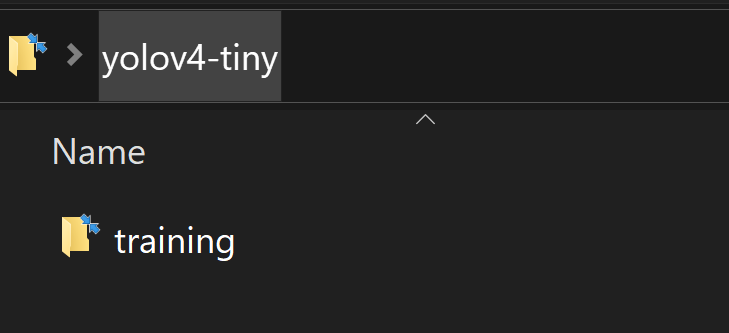

1) Create ‘yolov4-tiny’ and ‘training’ folders

Create a folder named yolov4-tiny on your Desktop. Next, create another folder named training inside the yolov4-tiny folder. This is where we will save our trained weights. (This path is set in the obj.data file, which we will create later.)

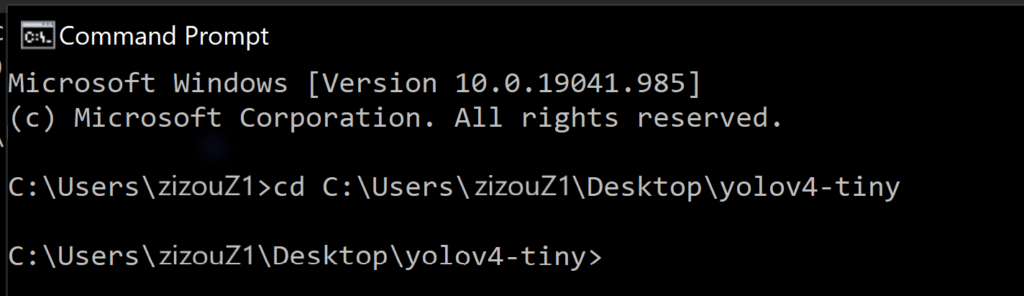

2) Open command prompt and navigate to the “yolov4-tiny” folder

Navigate to C:\Users\zizou\Desktop\yolov4-tiny folder.

NOTE: Replace zizou here with your Username

cd C:\Users\zizou\Desktop\yolov4-tiny

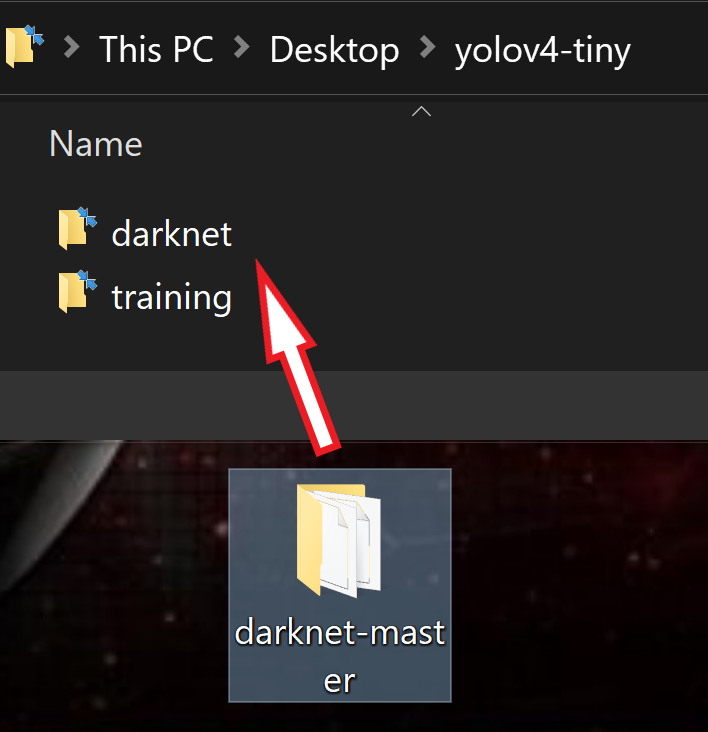

3) Create and copy the darknet.exe file

Build your darknet folder containing the darknet.exe file and copy it into the yolov4-tiny folder. To learn how to build the darknet folder containing the darknet.exe file using CMake, go to this blog. Follow all the steps there for YOLO installation on Windows.

This step can be a little tedious, and it is the most difficult part of the entire process. The rest of the training process below is fairly simple. So, make sure you follow all the steps in the above-mentioned article carefully to build darknet, and make sure CUDA and cuDNN are installed and configured on your system. You’ll find all the steps explained in detail in section A of the above-mentioned blog. You can also check out my video on YOLO installation for Windows at the bottom of that blog, under the YouTube Videos section.

Once the above process is done, copy the resulting darknet-master folder to the yolov4-tiny folder on your desktop. Rename the darknet-master folder to darknet for simplicity.

4) Create & copy the following files which we need for training a custom detector

a. Labeled Custom Dataset

b. Custom cfg file

c. obj.data and obj.names files

d. process.py file (to create train.txt and test.txt files for training)

I have uploaded my custom files for mask detection on my GitHub. I am working with 2 classes i.e. “with_mask” and “without_mask”.

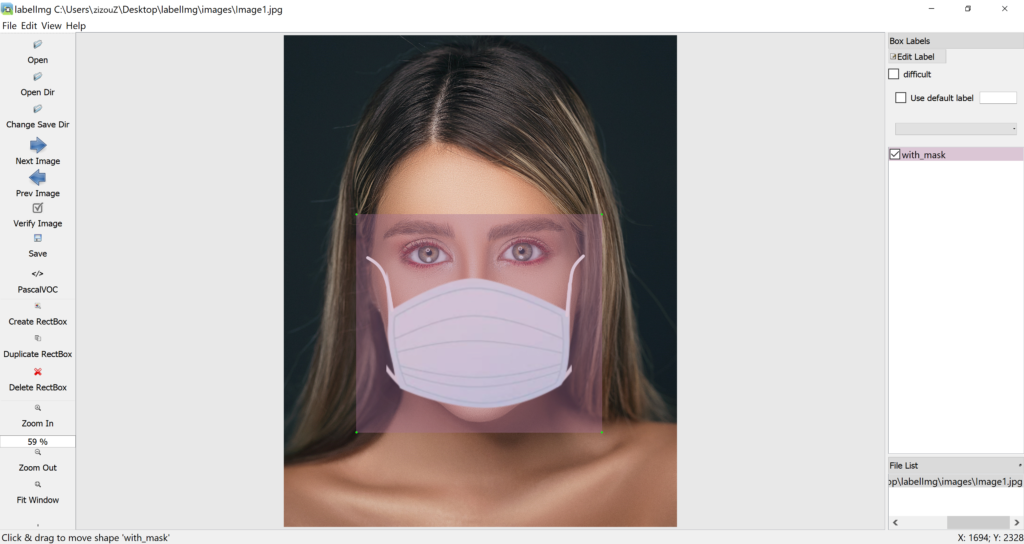

Labeling your Dataset

Input image example (Image1.jpg)

You can use any software for labeling like the labelImg tool.

I use an open-source labeling tool called OpenLabeling with a very simple UI.

Click on the link below to know more about the labeling process and other software for it:

Image Dataset Labeling article

NOTE: Garbage In = Garbage Out. Choosing and labeling images is the most important part. Try to find good-quality images. The quality of the data goes a long way toward determining the quality of the result.

The output label file in YOLO format looks like the one shown below.

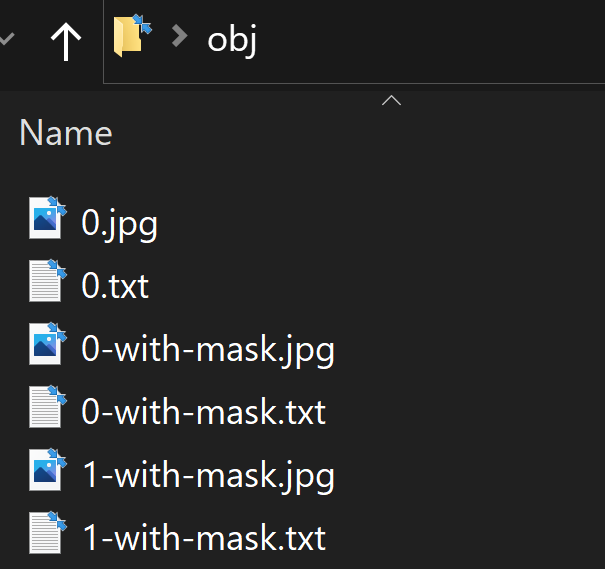
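Each line of a YOLO label file describes one box as a class index followed by the box center, width, and height, all normalized by the image size. The sketch below shows how such a line could be derived from a pixel box. The function name `to_yolo_line`, the sample box, the 400x400 image size, and the use of class index 0 are all assumptions made for illustration; your labeling tool does this conversion for you.

```python
def to_yolo_line(class_id, box, image_size):
    """Convert a pixel box (x_min, y_min, x_max, y_max) to a YOLO label line."""
    x_min, y_min, x_max, y_max = box
    img_w, img_h = image_size
    # YOLO stores the box center and size, each divided by the image dimensions.
    x_center = (x_min + x_max) / 2 / img_w
    y_center = (y_min + y_max) / 2 / img_h
    width = (x_max - x_min) / img_w
    height = (y_max - y_min) / img_h
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"


# Hypothetical example: one box in a 400x400 image, labeled with class index 0.
print(to_yolo_line(0, (100, 50, 300, 250), (400, 400)))
# prints: 0 0.500000 0.375000 0.500000 0.500000
```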

4(a) Create and copy the labeled custom dataset “obj” folder to the “yolov4-tiny” folder

Put all the input image “.jpg” files and their corresponding YOLO format labeled “.txt” files in a folder named obj.

Copy it to the yolov4-tiny folder.
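Before training, it can help to confirm that every image in the obj folder has a matching label file. The following is a minimal sketch of such a check, not part of the original files; the function name `find_unlabeled_images` is hypothetical, and it assumes the images use the “.jpg” extension.

```python
from pathlib import Path


def find_unlabeled_images(dataset_dir, image_ext=".jpg"):
    """Return the names of images that have no matching .txt label file."""
    dataset = Path(dataset_dir)
    missing = []
    for image_path in sorted(dataset.glob(f"*{image_ext}")):
        # The label file shares the image's name, with a .txt extension.
        if not image_path.with_suffix(".txt").exists():
            missing.append(image_path.name)
    return missing


# Only runs the check if an "obj" folder exists in the current directory.
if __name__ == "__main__" and Path("obj").is_dir():
    print(find_unlabeled_images("obj"))
```

An empty list means every image has a label file.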

4(b) Create your custom config file and copy it to the ‘yolov4-tiny’ folder

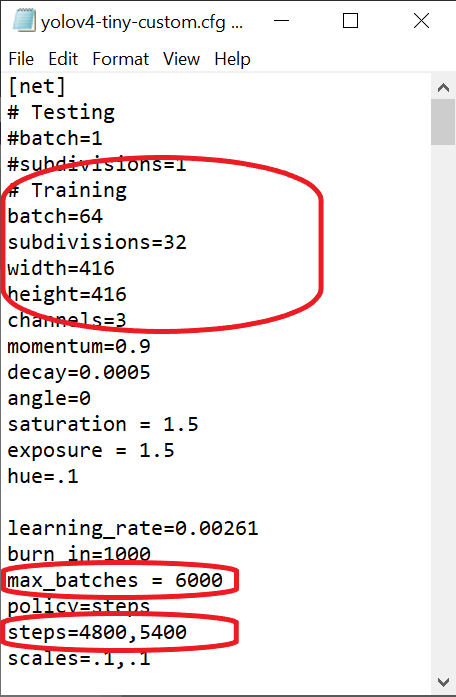

Download the yolov4-tiny-custom.cfg file from darknet/cfg directory, make changes to it, and copy it to the yolov4-tiny dir.

You can also download the custom config files from the official AlexeyAB Github.

You need to make the following changes in your custom config file:

- change line batch to batch=64

- change line subdivisions to subdivisions=16 or subdivisions=32 or subdivisions=64 (Read Note after this section for more info on these values)

- set network size width=416 height=416 or any value multiple of 32

- change line max_batches to classes*2000, but not less than the number of training images and not less than 6000, e.g. max_batches=6000 if you train for 3 classes

- change line steps to 80% and 90% of max_batches, e.g. steps=4800,5400

- change filters=255 to filters=(classes + 5)x3 in the 2 [convolutional] layers that come right before each [yolo] layer. Keep in mind that only the last [convolutional] before each of the [yolo] layers has to be changed.

- change line classes=80 to your number of objects in each of the 2 [yolo] layers.

So if classes=1 then it should be filters=18. If classes=2 then write filters=21.

You can tweak other parameter values too like the learning rate, angle, saturation, exposure, and hue once you’ve understood how the basics of the training process work. For beginners, the above changes should suffice.
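The rules above for max_batches, steps, and filters can be worked out with a few lines of Python. This is only a sketch of the arithmetic described in this section; the function name `yolo_cfg_values` and the image count of 1500 are made up for the example.

```python
def yolo_cfg_values(num_classes, num_train_images):
    """Compute max_batches, steps and filters from the rules listed above."""
    # classes*2000, but not less than the number of images and not less than 6000.
    max_batches = max(num_classes * 2000, num_train_images, 6000)
    # 80% and 90% of max_batches, using integer arithmetic.
    steps = (max_batches * 8 // 10, max_batches * 9 // 10)
    # (classes + 5) x 3 for the [convolutional] layer before each [yolo] layer.
    filters = (num_classes + 5) * 3
    return {"max_batches": max_batches, "steps": steps, "filters": filters}


# Hypothetical example: 2 classes (with_mask, without_mask) and 1500 images.
print(yolo_cfg_values(2, 1500))
# prints: {'max_batches': 6000, 'steps': (4800, 5400), 'filters': 21}
```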

NOTE: What are subdivisions?

- It is the number of mini-batches we split our batch into.

- Batch=64 -> loading 64 images for one iteration.

- Subdivision=8 -> Split the batch into 8 mini-batches, so 64/8 = 8 images per mini-batch, and these 8 images are sent for processing. This process is performed 8 times until the batch is completed, and a new iteration then starts with 64 new images.

- If you are using a GPU with low memory, set a higher value for subdivisions (32 or 64). Training will take longer, since fewer images are processed at a time and each batch is split into more mini-batches.

- If you have a GPU with high memory, set a lower value for subdivisions (16 or 8). This will speed up the training process, as more images are processed per mini-batch.
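To see how the subdivisions value changes the mini-batch size, here is a small sketch of the division described above. The function name `images_per_mini_batch` is hypothetical, and rejecting values that do not divide the batch evenly is a simplification of this sketch, not a documented Darknet rule.

```python
def images_per_mini_batch(batch, subdivisions):
    """Return how many images are processed at once for a given cfg setting."""
    if batch % subdivisions != 0:
        # Simplification for this sketch: only allow even splits.
        raise ValueError("batch should be divisible by subdivisions")
    return batch // subdivisions


for subdivisions in (8, 16, 32, 64):
    size = images_per_mini_batch(64, subdivisions)
    print(f"subdivisions={subdivisions}: {size} images per mini-batch")
```

With batch=64, this gives 8, 4, 2, and 1 images per mini-batch respectively.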

4(c) Create your “obj.data” and “obj.names” files and copy them to the yolov4-tiny folder

obj.data

The obj.data file has:

- The number of classes.

- The path to train.txt and test.txt files that we will create later.

- The path to “obj.names” file which contains the names of the classes.

- The path to the training folder where the training weights will be saved.

classes = 2

train = data/train.txt

valid = data/test.txt

names = data/obj.names

backup = ../training

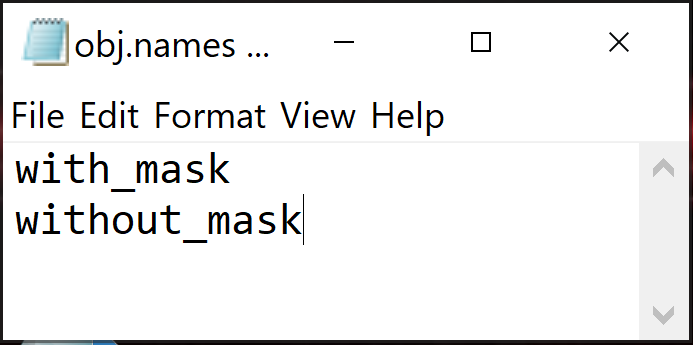

obj.names

The obj.names file contains the object names, each on a new line. Make sure the classes are in the same order as in the class_list.txt file used while labeling the images, so that the index id of every class matches the one used in the labeled YOLO text files.
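If you prefer to generate these two files instead of typing them, something like the following sketch would do it. It writes the same obj.data values shown above; the function name `write_obj_files` is hypothetical, and the class order with_mask, without_mask is an assumption that must match the order you used while labeling.

```python
from pathlib import Path


def write_obj_files(class_names, out_dir="."):
    """Write obj.names and obj.data into out_dir and return the obj.data text."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    # obj.names: one class name per line, in labeling order.
    (out / "obj.names").write_text("\n".join(class_names) + "\n")
    # obj.data: the same keys and paths as shown in this tutorial.
    obj_data = "\n".join([
        f"classes = {len(class_names)}",
        "train = data/train.txt",
        "valid = data/test.txt",
        "names = data/obj.names",
        "backup = ../training",
    ])
    (out / "obj.data").write_text(obj_data + "\n")
    return obj_data


if __name__ == "__main__":
    # Assumed class order; check it against your own class_list.txt.
    print(write_obj_files(["with_mask", "without_mask"]))
```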

4(d) Copy the <process.py> script file to your yolov4-tiny folder

(To divide all image files into 2 parts. 90% for train and 10% for test)

This process.py script creates the files train.txt & test.txt where the train.txt file has paths to 90% of the images and test.txt has paths to 10% of the images.

You can download the process.py script from my GitHub.

IMPORTANT: The “process.py” script has only the “.jpg” format written in it, so other formats such as “.png”, “.jpeg”, or even “.JPG” (in capitals) won’t be recognized. If you are using any other format, make changes in the process.py script accordingly.
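For reference, a 90/10 split of this kind could be written roughly as follows. This is a sketch and not the process.py script from my GitHub: the function name `split_dataset`, the fixed random seed, and the set of accepted extensions are assumptions. Unlike the “.jpg”-only behavior described above, it matches extensions case-insensitively.

```python
import random
from pathlib import Path

# Assumed set of image extensions; compared in lowercase so ".JPG" also matches.
IMAGE_EXTENSIONS = {".jpg", ".jpeg", ".png"}


def split_dataset(image_dir="data/obj", out_dir="data", test_percent=10, seed=0):
    """Write train.txt and test.txt listing image paths; return (n_train, n_test)."""
    images = sorted(
        p for p in Path(image_dir).iterdir()
        if p.suffix.lower() in IMAGE_EXTENSIONS
    )
    # Shuffle with a fixed seed so the split is reproducible.
    random.Random(seed).shuffle(images)
    n_test = len(images) * test_percent // 100
    test, train = images[:n_test], images[n_test:]
    out = Path(out_dir)
    # One image path per line, written with forward slashes.
    (out / "train.txt").write_text("".join(f"{p.as_posix()}\n" for p in train))
    (out / "test.txt").write_text("".join(f"{p.as_posix()}\n" for p in test))
    return len(train), len(test)


# Only runs if data/obj exists, i.e. when started from the darknet directory.
if __name__ == "__main__" and Path("data/obj").is_dir():
    print(split_dataset())
```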

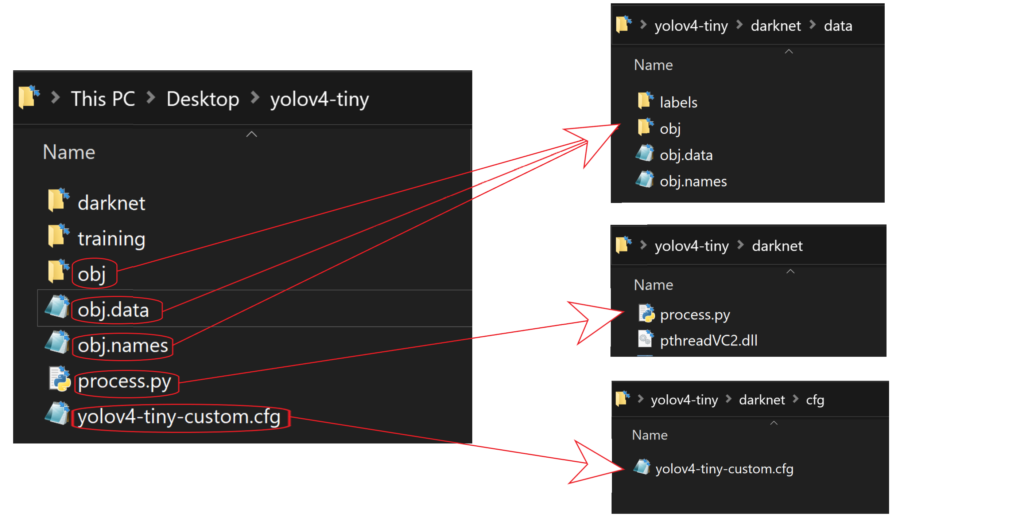

Now that we have created all the files, our yolov4-tiny folder on our desktop should look like this:

5) Copy all the files from the ‘yolov4-tiny' directory to the ‘yolov4-tiny/darknet’ directory

You can do all these steps manually through File Explorer.

First, clean the data and cfg folders. Remove all the files from the data folder, but keep the labels folder inside it, which is required for writing label names on the detection boxes. Next, empty the cfg folder entirely, as we already have our custom config file in the yolov4-tiny main folder.

Next, copy all the files:

- Copy yolov4-tiny-custom.cfg to the darknet/cfg directory.

- Copy obj.names, obj.data and the obj folder to the darknet/data directory.

- Copy process.py to the darknet directory.

OR

Do it using the command prompt with the following commands. The current working directory is C:\Users\zizou\Desktop\yolov4-tiny\darknet

Cleaning data and cfg folders.

REM change dir to the data folder and delete all files in it; the labels folder is kept

cd data/

FOR %I IN (*) DO IF NOT %I == labels DEL %I

cd ..

REM delete the cfg dir

rmdir /s cfg

REM Are you sure (Y/N)? press y and enter

REM create a new cfg folder

mkdir cfg

5(a) Copy the obj folder so that it is now in /darknet/data/ folder

xcopy ..\obj data\obj

In the prompt that follows, press D for Directory.

5(b) Copy your yolov4-tiny-custom.cfg file so that it is now in /darknet/cfg/ folder

copy ..\yolov4-tiny-custom.cfg cfg

5(c) Copy the obj.names and obj.data files so that they are now in /darknet/data/ folder

copy ..\obj.names data

copy ..\obj.data data

5(d) Copy the process.py file into the current darknet directory

copy ..\process.py .

6) Run the process.py python script to create the train.txt & test.txt files inside the data folder

The current working directory is C:\Users\zizou\Desktop\yolov4-tiny\darknet

python process.py

List the contents of the data folder to check if the train.txt & test.txt files have been created.

dir data

#OR

ls data

The above process.py script creates the two files train.txt and test.txt, where train.txt has paths to 90% of the images and test.txt has paths to 10% of the images. These files look as shown below.

7) Download and copy the pre-trained YOLOv4-tiny weights to the “yolov4-tiny\darknet” directory.

The current working directory is C:\Users\zizou\Desktop\yolov4-tiny\darknet

Here we use transfer learning. Instead of training a model from scratch, we start from pre-trained YOLOv4-tiny weights that cover the first 29 layers of the network. Download the YOLOv4-tiny pre-trained weights file from here and copy it to your darknet folder.

yolov4-tiny.conv.29 pre-trained weights file

8) Training

Train your custom detector

The current working directory is C:\Users\zizou\Desktop\yolov4-tiny\darknet

For best results, you should stop the training when the average loss is less than 0.05 if possible or at least constantly below 0.3, else train the model until the average loss does not show any significant change for a while.

darknet.exe detector train data/obj.data cfg/yolov4-tiny-custom.cfg yolov4-tiny.conv.29 -dont_show -map

The -map parameter here gives us the mean average precision (mAP) during training. The higher the mAP, the better it is for object detection. You can remove the -dont_show parameter to see the progress chart of mAP and loss against iterations.

You can visit the official AlexeyAB Github page which gives a detailed explanation on when to stop training. Click on the link below to jump to that section.

AlexeyAB GitHub When to stop training

To restart your training (In case the training does not finish and the training process gets interrupted)

If the training process gets interrupted or stops for some reason, you don’t have to start training your model from scratch again. You can restart training from where you left off. Use the weights that were saved last. The weights are saved every 100 iterations as yolov4-tiny-custom_last.weights in the training folder inside the yolov4-tiny dir. (The path we gave as backup in “obj.data” file).
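The choice between starting fresh and resuming can be expressed in a few lines of Python. The sketch below only builds the command line, using the file names and flags from this tutorial; the function name `build_train_command` is hypothetical.

```python
from pathlib import Path


def build_train_command(backup_dir="../training", pretrained="yolov4-tiny.conv.29"):
    """Return the darknet training command, resuming from last weights if present."""
    last = Path(backup_dir) / "yolov4-tiny-custom_last.weights"
    # Resume from the last saved weights when they exist, else use the pre-trained file.
    weights = last.as_posix() if last.exists() else pretrained
    return [
        "darknet.exe", "detector", "train",
        "data/obj.data", "cfg/yolov4-tiny-custom.cfg",
        weights, "-dont_show", "-map",
    ]


if __name__ == "__main__":
    print(" ".join(build_train_command()))
```

The returned list can be passed to `subprocess.run` from the darknet directory if you want to start training from a script.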

So to restart training run the following command:

darknet.exe detector train data/obj.data cfg/yolov4-tiny-custom.cfg ../training/yolov4-tiny-custom_last.weights -dont_show -map

9) Check performance

Check the training chart

You can check the performance of all the trained weights by looking at the chart.png file. However, the chart.png file only shows results if the training does not get interrupted i.e. if you do not get disconnected or lose your session. If you restart training from a saved point, this will not work.

Open the chart.png file in the darknet directory.

If this does not work, there are other methods to check your performance. One of them is by checking the mAP of the trained weights.

Check mAP (mean average precision)

You can check the mAP for all the weights saved every 1000 iterations, e.g. yolov4-tiny-custom_4000.weights, yolov4-tiny-custom_5000.weights, yolov4-tiny-custom_6000.weights, and so on. This way you can find out which weights file gives you the best result. The higher the mAP, the better.
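If you want to check every saved checkpoint in turn, the command lines can be generated with a short script. This is a sketch under the assumption that the checkpoints are named yolov4-tiny-custom_NNNN.weights inside the training folder; the function name `checkpoint_map_commands` is hypothetical, and it only builds the `darknet.exe detector map` commands used in this tutorial without running them.

```python
import re
from pathlib import Path


def checkpoint_map_commands(backup_dir="../training"):
    """Build one 'detector map' command per numbered checkpoint, in iteration order."""
    pattern = re.compile(r"yolov4-tiny-custom_(\d+)\.weights$")
    found = []
    for path in Path(backup_dir).glob("yolov4-tiny-custom_*.weights"):
        match = pattern.search(path.name)
        # Skip files such as _last.weights and _best.weights.
        if match:
            found.append((int(match.group(1)), path))
    return [
        ["darknet.exe", "detector", "map", "data/obj.data",
         "cfg/yolov4-tiny-custom.cfg", path.as_posix(), "-points", "0"]
        for _, path in sorted(found)
    ]


if __name__ == "__main__":
    for command in checkpoint_map_commands():
        print(" ".join(command))
```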

Run the following command to check the mAP for a particular saved weights file, where xxxx is its iteration number (e.g. 4000, 5000, 6000, …).

darknet.exe detector map data/obj.data cfg/yolov4-tiny-custom.cfg ../training/yolov4-tiny-custom_xxxx.weights -points 0

10) Test your custom Object Detector

Make changes to your custom config file to set it to test mode

- change line batch to batch=1

- change line subdivisions to subdivisions=1

You can do it either manually or by simply running the code below

cd cfg

sed -i 's/batch=64/batch=1/' yolov4-tiny-custom.cfg

sed -i 's/subdivisions=32/subdivisions=1/' yolov4-tiny-custom.cfg

cd ..

NOTE: In the second sed command above, replace 32 with the subdivisions value (16, 32, or 64) that you have set in your cfg file. Also, to use “sed” or other Unix commands in Windows, you need to install them using either Cygwin or GnuWin32.
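If you do not have sed installed, the same two changes can be made with Python. The following is a minimal sketch rather than a tested replacement for the commands above; the function name `set_test_mode` is hypothetical. It rewrites the first uncommented batch= and subdivisions= lines, whatever their current values are.

```python
import re
from pathlib import Path


def set_test_mode(cfg_text):
    """Return cfg text with the first batch= and subdivisions= lines set to 1."""
    # ^ with re.MULTILINE matches at line starts, so commented "#batch=" lines are skipped.
    cfg_text = re.sub(r"^batch=\d+", "batch=1", cfg_text, count=1, flags=re.MULTILINE)
    cfg_text = re.sub(r"^subdivisions=\d+", "subdivisions=1", cfg_text,
                      count=1, flags=re.MULTILINE)
    return cfg_text


# Only edits the file if it exists, i.e. when started from the darknet directory.
cfg_path = Path("cfg/yolov4-tiny-custom.cfg")
if __name__ == "__main__" and cfg_path.is_file():
    cfg_path.write_text(set_test_mode(cfg_path.read_text()))
```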

Run detector on an image

Copy an image to a folder named mask_test_images on your desktop.

Run your custom detector on an image with this command. (The thresh flag sets the minimum confidence score a detection needs in order to be shown)

darknet.exe detector test data/obj.data cfg/yolov4-tiny-custom.cfg ../training/yolov4-tiny-custom_best.weights ../../mask_test_images/image1.jpg -thresh 0.3

The output for this is saved as predictions.jpg inside the darknet folder. You can copy it to any output folder and save it with a different name. I am saving it as output_image.jpg inside the mask_test_images folder itself.

copy predictions.jpg ..\..\mask_test_images\output_image.jpg

Run detector on a video

Copy a video to a folder named mask_test_videos on your desktop.

Run your custom detector on a video with this command. (The thresh flag sets the minimum confidence score a detection needs in order to be shown). This saves the output video inside the same folder as “output.avi”.

darknet.exe detector demo data/obj.data cfg/yolov4-tiny-custom.cfg ../training/yolov4-tiny-custom_best.weights ../../mask_test_videos/test.mp4 -thresh 0.5 -i 0 -out_filename ../../mask_test_videos/output.avi

Run detector on a live webcam

Run the code below.

darknet.exe detector demo data/obj.data cfg/yolov4-tiny-custom.cfg ../training/yolov4-tiny-custom_best.weights -thresh 0.5

NOTE:

The dataset I have collected for mask detection contains mostly close-up images. For more long-shot images you can search online. There are many sites where you can download labeled and unlabeled datasets. I have given a few links at the bottom under Dataset Sources. I have also given a few links for mask datasets. Some of them have more than 10,000 images.

There are certain tweaks and changes we can make to our training config file, and we can add more images to the dataset for every object class through augmentation. However, we have to be careful that this does not cause overfitting, which hurts the accuracy of the model.

Beginners can start simply by using the config file I have uploaded on my GitHub. I have also uploaded my mask images dataset along with the YOLO format labeled text files. It might not be the best, but it will give you a good start on how to train your own custom detector model using YOLO. You can find a labeled dataset of better quality, or an unlabeled dataset and label it yourself later.

GitHub link

I have uploaded all the files needed for training a custom YOLOv4-tiny detector in Windows on my GitHub link below.

yolov4-tiny-custom-training_LOCAL_MACHINE

Labeled Dataset (obj.zip)

CREDITS

References

Dataset Sources

You can download datasets for many objects from the sites mentioned below. These sites also contain images of many classes of objects along with their annotations/labels in multiple formats such as the YOLO_DARKNET text files and the PASCAL_VOC XML files.

Mask Dataset Sources

I have used these 3 datasets for my labeled dataset:

More Mask Datasets

- Prasoonkottarathil Kaggle (20000 images)

- Ashishjangra27 Kaggle (12000 images)

- Andrewmvd Kaggle

Video Sources